TL;DR

- Data quality is crucial for effective lead databases and marketing automation.

- Automate data deduplication to avoid manual errors and enhance consistency.

- Normalize geographical and company name data to support targeted campaigns and clarify sales pipelines.

- Infer missing geographical data using known relationships to fill gaps efficiently.

- Segment leads using job titles to improve campaign precision and engagement.

- Adopt data automation strategies carefully by classifying data, outlining transformations, and scheduling updates.

- Continuous advancement in automation and data science enables better data handling and utilisation in enterprise marketing.

In database administration, some of the most important aspects are data collection, data cleansing, and the choice of database technologies for storage and retrieval. A newly built lead database, for example, may be full of holes: a raw lead database can contain duplicate records and incomplete data, and it usually lacks segmentation. Poor data quality makes it difficult to plan targeted campaigns or user engagement activities. A compromised lead database also means you cannot use marketing automation tools effectively.

Smart Tips to Improve Database Entries!

Many marketers now think the only way to make their database efficient is to pay a data vendor to clean it. Having a vendor validate and enrich your data is a sound approach, but you can also keep your data clean yourself with automation solutions. This article discusses some smart tips for improving the quality of your database entries by investing your time in data automation, which makes the most laborious data cleansing tasks easier.

1. De-duplication of data

Marketers often hesitate to automate deduplication. They prefer to eyeball duplicates and merge them manually, for fear of eliminating valuable records. If the manual merging follows a consistent logic, however, it can easily be automated. If the merge logic relies on many arbitrary judgment calls, manual deduplication across a huge database is unlikely to produce better results than an automation tool.
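Once the merge logic is consistent, it can be written down as code. The sketch below assumes a hypothetical rule. Records are duplicates when their email addresses match after trimming whitespace and lowercasing. When merging, the most recently updated non-empty value of each field wins. The field names (`email`, `name`, `phone`, `updated`) are illustrative and do not come from any particular tool.

```python
from datetime import date


def dedupe_leads(leads):
    """Merge lead records that share an email address.

    Hypothetical merge rule: records are duplicates when their emails match
    after trimming whitespace and lowercasing; for each field, the most
    recently updated non-empty value wins, and blanks never overwrite data.
    """
    merged = {}
    # Process oldest first so newer non-empty values overwrite older ones.
    for lead in sorted(leads, key=lambda r: r["updated"]):
        key = lead["email"].strip().lower()
        record = merged.setdefault(key, {})
        for field, value in lead.items():
            if value not in ("", None) or field not in record:
                record[field] = value
        record["email"] = key  # store the normalized email
    return list(merged.values())


leads = [
    {"email": "jane.doe@example.com ", "name": "J. Doe", "phone": "555-0100",
     "updated": date(2023, 1, 5)},
    {"email": "Jane.Doe@example.com", "name": "Jane Doe", "phone": "",
     "updated": date(2023, 6, 1)},
    {"email": "sam@example.com", "name": "Sam Lee", "phone": "",
     "updated": date(2023, 3, 2)},
]
for row in dedupe_leads(leads):
    print(row["email"], row["name"], row["phone"])
```

Because the rule is explicit, every merge across the database is applied the same way. If the rule turns out to be wrong, you can change it in one place and rerun it.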

2. Normalizing the geographical data

Without normalized and complete geodata, it is difficult for marketers to run regional campaigns, plan events, or route leads accordingly. Typos and misspelled entries also make it hard to build a geographically segmented lead database. At a minimum, you need to normalize geographical entries such as states, provinces, and countries. For example, you can use "MA" instead of "Massachusetts," but whichever form you choose must be standardized and followed strictly. To standardize datasets in an enterprise database, you may rely on the services of providers such as RemoteDBA.
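A minimal normalization step maps common spellings to a single standard form and flags anything it does not recognize. The lookup table below is a small, hypothetical sample; a real one would cover every USPS two-letter code and the variants that actually appear in your data.

```python
# Hypothetical lookup table covering a few US states only, for illustration.
STATE_CODES = {
    "massachusetts": "MA",
    "mass": "MA",
    "california": "CA",
    "calif": "CA",
    "new york": "NY",
}
VALID_CODES = set(STATE_CODES.values())


def normalize_state(raw):
    """Return a two-letter state code, or None if the entry is unrecognized.

    Periods and extra whitespace are removed and case is ignored, so
    "Mass.", " massachusetts " and "ma" all map to "MA".
    """
    if raw is None:
        return None
    cleaned = " ".join(raw.replace(".", " ").split()).lower()
    if cleaned.upper() in VALID_CODES:
        return cleaned.upper()
    return STATE_CODES.get(cleaned)  # None signals "send to manual review"


for entry in ["Massachusetts", "  mass. ", "ma", "Calif.", "Masachusetts"]:
    print(repr(entry), "->", normalize_state(entry))
```

Returning `None` for unrecognized values, rather than guessing, keeps typos such as "Masachusetts" visible so they can be corrected or added to the table.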

3. Inferring missing geographical data

Some data fields have known relationships with one another, so knowing the value of one field may let you infer a missing one. For example, instead of struggling with incomplete address data, you can have the system fill in the blanks automatically by leveraging these known relationships. Here are two examples.

- If the ZIP code is 94065, the system can infer the city as 'Redwood City' and the state as 'California'.

- If the country calling code is 353, the country can be inferred as 'Ireland'.
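The inference can be sketched as lookups against reference tables that fill only the fields that are blank. The two tables below hold just the entries from the examples above. In practice they would be loaded from a full ZIP code database and the complete list of international calling codes. The field names (`zip`, `city`, `state`, `calling_code`, `country`) are assumptions.

```python
# Hypothetical reference tables containing only the examples above.
ZIP_TO_CITY_STATE = {"94065": ("Redwood City", "California")}
CALLING_CODE_TO_COUNTRY = {"353": "Ireland"}


def fill_missing_geo(lead):
    """Fill blank city/state/country fields from known relationships.

    Existing non-blank values are never overwritten, so data entered by
    the lead or a sales rep always takes precedence over the inference.
    """
    filled = dict(lead)
    zip_code = (filled.get("zip") or "").strip()[:5]  # ZIP+4 -> 5-digit ZIP
    if zip_code in ZIP_TO_CITY_STATE:
        city, state = ZIP_TO_CITY_STATE[zip_code]
        filled["city"] = filled.get("city") or city
        filled["state"] = filled.get("state") or state
    code = (filled.get("calling_code") or "").strip().lstrip("+")
    if code in CALLING_CODE_TO_COUNTRY:
        filled["country"] = filled.get("country") or CALLING_CODE_TO_COUNTRY[code]
    return filled


print(fill_missing_geo({"zip": "94065-1234", "city": "", "state": None}))
print(fill_missing_geo({"calling_code": "+353"}))
```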

4. Normalization of company names

Another major problem in lead database management is non-standardized company names. In a B2B lead database, the same company may appear under different entries, such as "Toyota Inc. USA," "Toyota Motor USA," or just "Toyota Motors." Normalizing every company name in a lead database is not easy. So, instead of taking on that tedious task across the whole database, focus on the names in your target account and customer lists. Efficiently cleaning these critical records can bring greater clarity to your sales pipeline report and help fine-tune your marketing metrics. If you are still looking for an effective database for sales leads, online comparison guides that highlight each database's unique features and capabilities can make your search much easier.
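Restricting normalization to target accounts keeps the problem small enough to automate. The sketch below assumes a hypothetical approach. Each name is reduced to lowercase word tokens, legal-form and region tokens are dropped, and the record is matched to a target account when it contains all of that account's identifying tokens. The noise-token list and the `TARGET_ACCOUNTS` table are illustrative assumptions to tune against your own data.

```python
import re

# Legal-form and region tokens ignored when comparing names (an assumption).
NOISE_TOKENS = {"inc", "corp", "corporation", "co", "llc", "ltd", "usa", "us"}

# Hypothetical target-account list: canonical name -> identifying tokens.
TARGET_ACCOUNTS = {"Toyota": {"toyota"}}


def name_tokens(name):
    """Lowercase the name, split it into words, and drop noise tokens."""
    words = re.findall(r"[a-z0-9]+", name.lower())
    return {w for w in words if w not in NOISE_TOKENS}


def match_target_account(name):
    """Return the canonical target-account name, or None if nothing matches.

    A record matches when it contains every identifying token of an account.
    This is deliberately simple: any other company whose name contains
    "Toyota" would match too, so review matches before merging records.
    """
    tokens = name_tokens(name)
    for canonical, key_tokens in TARGET_ACCOUNTS.items():
        if key_tokens <= tokens:  # subset test
            return canonical
    return None


for raw in ["Toyota Inc. USA", "Toyota Motor USA", "Toyota Motors", "Acme Corp"]:
    print(raw, "->", match_target_account(raw))
```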

5. Data Management Statistics

Here we have compiled a list of the most useful marketing data management statistics to help you uncover industry trends in a constantly evolving space.

It covers general marketing data management figures as well as statistics on data quality, data security compliance, data breach costs, and more.

6. Segmenting using the job titles

As you might expect, a well-segmented database is the foundation of effective personal engagement and targeted campaigns. Useful segments include job function, job level, organization size, industry, and job location. Common data services can enrich generic data, but to be truly effective, segmentation needs to reflect how you sell and to whom. For some marketers, it is enough to know that a lead works in IT. Others need finer distinctions, such as security, networking, or engineering. Job titles are a widely available source of segmentation data. With a proper mapping of title keywords, it is easy to segment leads by job level and function. Here is an example.

- "Accounts Payable" may indicate the job function 'Finance'.

- "CFO" or "Managing Director" may indicate the job level 'Executive', and so on.
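Keyword mapping of this kind can be expressed as ordered regular-expression rules, one list for job level and one for job function. The rules below are hypothetical and cover only a handful of titles. The first matching rule wins, so more specific patterns such as "vice president" and "managing director" must come before generic ones such as "president" and "director".

```python
import re

# Hypothetical keyword rules, checked in order; the first match wins.
LEVEL_RULES = [
    (r"\b(vp|svp|evp|vice president)\b", "VP"),
    (r"\b(chief|ceo|cfo|cio|cto|cmo|managing director|president)\b", "Executive"),
    (r"\b(director|head of)\b", "Director"),
    (r"\b(manager|lead)\b", "Manager"),
]
FUNCTION_RULES = [
    (r"\b(cfo|finance|accounts payable|accounts receivable|controller)\b", "Finance"),
    (r"\b(marketing|cmo)\b", "Marketing"),
    (r"\bsecurity\b", "Security"),
    (r"\bnetwork(ing)?\b", "Networking"),
    (r"\bengineer(ing)?\b", "Engineering"),
    # Titles are lowercased, so this also matches the English word "it";
    # review such edge cases in your own data.
    (r"\b(it|cio|cto)\b", "IT"),
]


def classify(title, rules, default="Unknown"):
    """Return the label of the first rule whose pattern appears in the title."""
    text = title.lower()
    for pattern, label in rules:
        if re.search(pattern, text):
            return label
    return default


def segment_lead(title):
    return {"level": classify(title, LEVEL_RULES),
            "function": classify(title, FUNCTION_RULES)}


for t in ["CFO", "Managing Director", "Accounts Payable Specialist", "Director of IT"]:
    print(t, "->", segment_lead(t))
```

Keeping the rules as data rather than hard-coded `if` statements makes it easy to add keywords as new titles show up in the database.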

All five of these quick tips will help you improve the quality of your lead database without spending a fortune. All you need is to spend a few hours with reliable data automation tools. There are a few things to keep in mind when adopting data automation strategies, as outlined below.

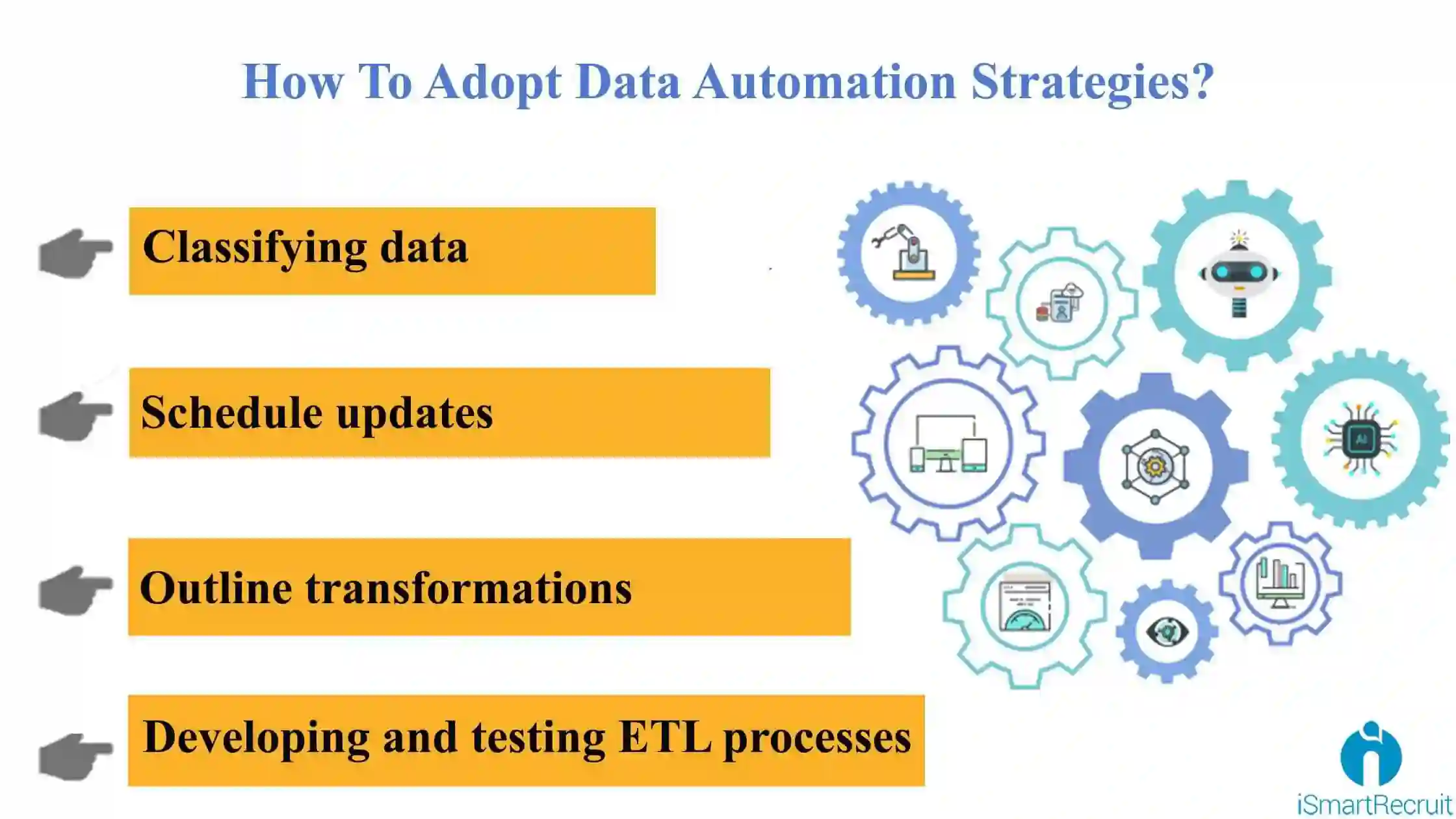

How to Adopt Data Automation Strategies

Classifying data

The first step in data automation is to categorize your data sources by priority and ease of access. If you use an automated tool for data extraction, make sure it supports all the formats that are crucial to your operations.
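This classification step can be made concrete as a small inventory of sources that is sorted by priority and then ease of access, with sources in unsupported formats flagged. The scales, the source names, and the `SUPPORTED_FORMATS` set are hypothetical placeholders for whatever your extraction tool actually handles.

```python
from dataclasses import dataclass

# Formats the extraction tool can read; an assumption for this sketch.
SUPPORTED_FORMATS = {"csv", "json", "xlsx"}


@dataclass
class DataSource:
    name: str
    priority: int       # 1 = most important to campaigns (hypothetical scale)
    access_effort: int  # 1 = easiest to reach (hypothetical scale)
    fmt: str            # file or export format


def plan_extraction(sources):
    """Order supported sources by priority, then ease of access.

    Returns (ordered source names, names of sources in unsupported formats).
    """
    supported = [s for s in sources if s.fmt.lower() in SUPPORTED_FORMATS]
    unsupported = [s.name for s in sources if s.fmt.lower() not in SUPPORTED_FORMATS]
    supported.sort(key=lambda s: (s.priority, s.access_effort))
    return [s.name for s in supported], unsupported


sources = [
    DataSource("Webinar sign-ups", priority=2, access_effort=1, fmt="csv"),
    DataSource("CRM export", priority=1, access_effort=2, fmt="json"),
    DataSource("Trade show scans", priority=2, access_effort=3, fmt="pdf"),
    DataSource("Web forms", priority=1, access_effort=1, fmt="csv"),
]
order, flagged = plan_extraction(sources)
print("Extract in this order:", order)
print("Needs a different tool or format:", flagged)
```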

Outline transformations

The next step is to identify the transformations needed to convert your data sources into the desired format. For example, you may need to expand acronyms into full names or export relational database data to CSV files. Different database platforms require different automation tools and techniques. Optimizing MariaDB differs from tweaking MySQL or Excel, so you need to customize your approach.
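Both transformations mentioned above can be sketched with Python's standard library. The sketch reads rows from a relational table (an in-memory SQLite database stands in for your real one), expands acronyms, and writes the result as CSV. The `ACRONYMS` table and the `leads` schema are hypothetical.

```python
import csv
import io
import sqlite3

# Hypothetical acronym table; build yours from the values in your data.
ACRONYMS = {"VP": "Vice President", "CFO": "Chief Financial Officer"}


def expand_acronyms(text):
    """Replace whole-word acronyms with their full names.

    Splits on whitespace, so a token with punctuation attached ("VP,")
    is left unchanged.
    """
    return " ".join(ACRONYMS.get(word, word) for word in text.split())


def table_to_csv(conn, table, columns, out):
    """Write selected columns of a relational table to CSV, expanding acronyms."""
    # Table and column names are interpolated, so pass trusted identifiers only.
    cursor = conn.execute(f"SELECT {', '.join(columns)} FROM {table}")
    writer = csv.writer(out)
    writer.writerow(columns)
    for row in cursor:
        writer.writerow([expand_acronyms(v) if isinstance(v, str) else v for v in row])


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE leads (name TEXT, title TEXT)")
conn.executemany("INSERT INTO leads VALUES (?, ?)",
                 [("Ana Ruiz", "VP Sales"), ("Raj Patel", "CFO")])
buffer = io.StringIO()
table_to_csv(conn, "leads", ["name", "title"], buffer)
print(buffer.getvalue())
```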

Developing and testing ETL processes

Based on the requirements outlined above, select a good ETL tool, plus a reverse ETL tool where needed, that has all the essentials to process and update data while retaining its quality. Then build and test your ETL processes with it.
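Whatever tool you choose, it helps to understand the shape of an ETL process and where testing fits. The sketch below is not a substitute for an ETL tool. It uses Python's built-in `sqlite3` to extract raw leads, transform them (clean emails and states, drop rows without an email), load them into a target table, and then run a post-load check that raises an error instead of silently passing bad data on. The table and column names are hypothetical.

```python
import sqlite3


def extract(source):
    """Read raw rows from the (hypothetical) raw_leads table."""
    return source.execute("SELECT email, state FROM raw_leads").fetchall()


def transform(rows):
    """Trim/lowercase emails, uppercase states, drop rows without an email."""
    cleaned = []
    for email, state in rows:
        email = (email or "").strip().lower()
        if not email:
            continue  # a lead without an email cannot be matched or contacted
        cleaned.append((email, (state or "").strip().upper() or None))
    return cleaned


def load(target, rows):
    """Upsert rows; the email primary key collapses duplicates (later rows win)."""
    target.executemany(
        "INSERT OR REPLACE INTO leads (email, state) VALUES (?, ?)", rows)
    target.commit()


def run_etl(source, target):
    rows = transform(extract(source))
    load(target, rows)
    # Post-load check: every distinct email we transformed must be present.
    expected = {email for email, _ in rows}
    present = {r[0] for r in target.execute("SELECT email FROM leads")}
    missing = expected - present
    if missing:
        raise ValueError(f"{len(missing)} leads failed to load")
    return len(expected)


source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE raw_leads (email TEXT, state TEXT)")
source.executemany("INSERT INTO raw_leads VALUES (?, ?)", [
    (" Jane@Example.com", "ma"),
    ("jane@example.com", "MA"),
    (None, "CA"),
    ("sam@example.com", None),
])
target = sqlite3.connect(":memory:")
target.execute("CREATE TABLE leads (email TEXT PRIMARY KEY, state TEXT)")
print(run_etl(source, target), "leads loaded")
```

Keeping `transform` a pure function makes it easy to unit-test on small, hand-written rows before the pipeline touches production data.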

Schedule updates

The last but important step is to schedule regular data updates. It is also recommended to use a good ETL tool that offers automation features such as workflow automation and job scheduling. This lets the processes run without manual intervention.
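In production, scheduling is normally handled by cron, your ETL tool's job scheduler, or a workflow orchestrator. To illustrate the idea only, the sketch below uses Python's standard `sched` module to run a job at fixed intervals a set number of times. `refresh_leads` is a hypothetical placeholder for your update job.

```python
import sched
import time


def run_periodically(job, interval_seconds, runs):
    """Run `job` every `interval_seconds`, `runs` times, then return.

    Illustration only: this blocks the calling thread for the whole period.
    """
    scheduler = sched.scheduler(time.monotonic, time.sleep)
    start = time.monotonic()
    for i in range(runs):
        # Each run is pinned to a fixed offset from the start, so a slow
        # job delays the next run but delays do not accumulate.
        scheduler.enterabs(start + i * interval_seconds, 1, job)
    scheduler.run()  # blocks until all scheduled runs have executed


def refresh_leads():
    """Hypothetical placeholder for the real data update job."""
    print("refreshing lead data at", time.strftime("%H:%M:%S"))


run_periodically(refresh_leads, interval_seconds=1, runs=3)
```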

Database for Enterprise Marketing in a Nutshell

As data automation advances, data science and machine learning models are evolving day by day. Although automated feature engineering is still new, it has the potential to help with many data science projects that use real-world data sets. With capable people handling your data, you can do wonders with it. Of course, you also need to ensure that the data remains secure at all times.

FAQs - Frequently Asked Questions

What is the importance of data deduplication in lead databases?

Data deduplication removes duplicate records, which can skew marketing results and waste resources. Automating this process with tools like iSmartRecruit helps keep your data consistent and accurate while reducing manual errors.

How can geographical data normalization benefit marketing campaigns?

Normalizing geographical data helps run targeted regional campaigns by standardising location details. This ensures your leads are grouped correctly, improving engagement and conversion rates.

Why should company names be normalized in a B2B database?

Standardising company names avoids confusion caused by varied entries. It clarifies your sales pipeline and marketing metrics. iSmartRecruit can assist by providing reliable data management solutions.

What role does segmentation by job title play in lead management?

Segmenting by job title allows precise targeting based on job function and level. This helps marketers tailor campaigns effectively, resulting in better engagement and higher ROI.